The 80/20 Rule Of Getting Good Copy Outputs From Your LLM

How to lead your copywriter into AI without burning hours or losing brand voice.

If your copy team isn’t using AI yet, you’re the one who has to change that.

Maybe a client started asking. Maybe your management brought it up. Maybe it’s the quiet pressure of every other agency talking about transforming their agency with AI.

The wrong move is rushing in.

The other wrong move is waiting too long.

Here’s the rule that gets it right.

The 80/20 Rule

Most copy teams that try AI without a plan fall into the same loop.

A copywriter writes a prompt. The output comes back generic. They spend an hour editing it back into the brand. They blame the model. They lose trust in the tool. They go back to writing manually, and quietly conclude AI isn’t there yet.

It is. They just walked the model into an empty room.

The agencies getting value from AI right now have figured out one thing.

Eighty per cent of a usable AI output comes from how you’ve set the project up before anyone writes a prompt. Twenty per cent is the prompt, the edit and the QC.

If the project is set up well, your team starts using AI on day one and produces work that actually sounds like your client’s brand. If it isn’t, AI quietly makes the work worse, and your team learns to mistrust the tool before they’ve ever seen it work properly.

For regulated clients — pharma, healthcare, financial services — the stakes are even higher. Quality control isn’t a stage of the work; it’s something you build into the project before anyone writes a prompt.

The job — your job — is to set the project up before they type their first prompt.

Here’s what we’ll cover:

The 80/10/10 split (and what each part is actually doing)

The three layers of context that earn their setup time back

A worked example: an HCP education programme for a pharma client

Where this rule doesn’t apply

The 80/10/10 Split

Three numbers. One sentence each.

80% — project setup. The slow, invisible work. The files, voice rules, templates and reference content the model reads before it generates anything. This is where the time goes up front — and how you get it back.

10% — the prompt. Much smaller than people think. One paragraph. One task, one audience, one constraint, one format. If your prompts are getting longer over time, that’s a signal the project setup isn’t doing enough.
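To make the one-paragraph shape concrete, here is a minimal sketch in Python. The `PagePrompt` class, its field names and the example values are my own illustration, not a feature of any AI tool. The only point is that four short fields are enough when the project setup is carrying everything else.

```python
from dataclasses import dataclass


@dataclass
class PagePrompt:
    """Hypothetical container for the four parts of a short prompt."""
    task: str
    audience: str
    constraint: str
    output_format: str

    def render(self) -> str:
        # Join the four parts into a single paragraph, one sentence each.
        parts = [self.task, self.audience, self.constraint, self.output_format]
        return " ".join(p.strip().rstrip(".") + "." for p in parts)


# Example values only; swap in the real task, reader and rules.
prompt = PagePrompt(
    task="Draft the mechanism-of-action page for [product]",
    audience="The reader is a specialist HCP",
    constraint="Use only the claim library for efficacy statements",
    output_format="Follow the brand voice and page template",
)
print(prompt.render())
```

If you find yourself wanting a fifth or sixth field, that is usually a sign that something belongs in the setup files instead.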

10% — QC and refine. Your internal revision round(s). Your typical agency-client feedback loop.

Most teams operate at zero per cent setup, ninety per cent prompt-and-pray, and ten per cent rescue editing. They’re working with the model untrained, every time. The output reflects it.

The 80%: Three Layers Of Context

Three layers do the heavy lifting. Build all three for a client and the model stops sounding generic.

1. Identity — who the client is, who their audience are, what good looks like (examples)

This is the most-skipped layer. It’s also the one that does the most lifting.

A pharma client targeting cardiologists needs different copy from one targeting GPs. Same indication. Different reader. Different stakes. Different language.

Same goes for a financial services client targeting wealth managers vs retail investors. Or a B2B SaaS client selling to compliance officers vs marketers.

Without this layer, the model defaults to a generic professional voice. Punchy. Aspirational. Empty. And for regulated clients, frequently non-compliant.

With it, the model writes for the actual reader (which means less editing, every time). Six bullet points are usually enough: who they are, who they sell to, the buyer’s job-to-be-done, regulatory context, three competitors and how the client differs, what the client doesn’t want to sound like.

2. Voice rules — banned words, sentence rhythm, examples that nailed it

This is the layer that takes the longest to write. It also saves the most time downstream.

A list of words the client never wants you to use. A list of phrases that flatten their voice. Industry-specific terms. A list of ‘banned’ AI words (the giveaways).

The model treats this as a hard filter. Once it knows the client doesn’t say “unlock” or “leverage” or “supercharge”, those words stop appearing. Your copywriter stops circling them in red.

The voice rules file is where most of your editing time goes when you don’t have one.
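Even with a banned list in place, a cheap automated check on the draft can act as a backstop during QC. The sketch below is an assumption about how you might do that, not part of any tool: the function name `find_banned` is hypothetical, and the three words are just the examples from above. A real list would come from the client’s voice rules file.

```python
import re

# Example banned words from this article; a real list comes from the client.
BANNED = ["unlock", "leverage", "supercharge"]


def find_banned(draft: str, banned: list[str]) -> list[str]:
    """Return the banned words that appear in the draft, in list order."""
    hits = []
    for word in banned:
        # \b stops a banned word matching inside a longer unrelated word;
        # the optional suffix catches simple inflections such as "unlocks".
        pattern = r"\b" + re.escape(word) + r"(s|d|ed|ing)?\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            hits.append(word)
    return hits


draft = "Unlock better outcomes and leverage the latest evidence."
print(find_banned(draft, BANNED))  # prints ['unlock', 'leverage']
```

This only catches exact words and simple endings. It won’t judge tone or rhythm, so it supports the copywriter’s read-through rather than replacing it.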

3. Reference content — what good looks like in practice

The third layer is structure plus pattern.

If you’re building a website, for example, that means templates for the page types the client uses: homepage, articles, features, about and so on. Each one is a skeleton lifted from a page that worked. Hero promise. Three outcomes. Proof. CTA. The model fills the skeleton.

Plus five to ten finished pages: pages that converted well, competitor pages the client likes, anything the team agreed felt right. The model pattern-matches against them and learns the rhythm of the client’s writing without anyone having to explain it.

Worked Example: An HCP Education Programme For A Pharma Client

Your agency is working on a new website for a pharma client. The brief: a new HCP-facing education hub for their lead product, ahead of an indication update. Eight pages. Mechanism of action, dosing guidance, two patient-type pages, two efficacy pages, an FAQ, and a prescriber resources page.

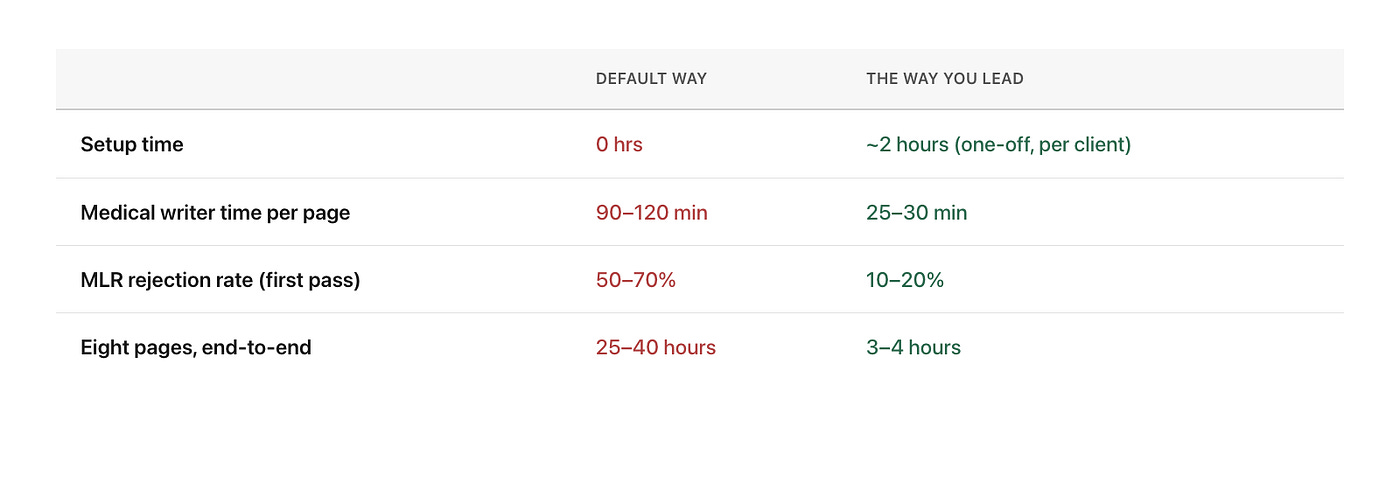

Two ways to bring AI into this project.

The default way (what most agencies do). A copywriter pastes each page brief into the chat. Asks for “clear, professional HCP copy”. Gets generic output back — promotional language where it shouldn’t be, claims drifting outside the indication, no fair balance, “patients” used loosely where the indication says “adults with X”.

A medical writer spends two hours per page reworking it. Then your team rejects half on the first pass anyway, because the model invented evidence claims that don’t sit in the trial data. By page three, the medical writer is faster doing it longhand.

Quick interlude: Markdown (.md) files

LLMs love Markdown files. They’re the easiest format for the model to read, and the most efficient in terms of your AI tokens. Word docs are OK, with PDFs close behind, as long as everything is rendered as editable text. But you’ll get the best results from using Markdown.

The way you’ll lead them through. Spend a few hours building four files for your client, before anyone on the team types a single prompt.

client-context.md: the product, the indication (in its exact approved wording), the HCP audience (specialists vs primary care, the typical clinical question they’re answering), the regulatory framework (ABPI, PMCPA, MHRA), three competitor products and how this one differs, and the MLR review process with known reviewer preferences.

brand-voice.md: register rules (peer-to-peer, evidence-led, no promotional superlatives), banned claim patterns (“first-line”, “best-in-class”, “gold standard”; none of these unless the data explicitly supports them), required hedging (“may reduce”, not “reduces”; “in the X trial, Y was observed”), reading-level guidance, and five to ten example sentences from past MLR-approved work.

page-templates.md: page skeletons that meet layout requirements. PI placement. Where safety information sits relative to efficacy. Reference list at the bottom. Required footnotes. Fair balance built into the structure, so the model can’t accidentally write an efficacy page without the safety context.

reference-pages.md: five to ten MLR-approved pages from past work or the client’s existing compliant content, plus a claim library: every approved efficacy claim with the trial reference and the exact wording that passed review.
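Many AI tools let you upload files like these straight into a project. If yours doesn’t, or someone on your team is working through an API, the files can be stitched into one context block. Here is a rough sketch, assuming all four files sit in a single project folder. The folder layout and the `build_context` function are my assumptions; only the file names come from the list above.

```python
from pathlib import Path

# File names from the list above; the single-folder layout is an assumption.
CONTEXT_FILES = [
    "client-context.md",
    "brand-voice.md",
    "page-templates.md",
    "reference-pages.md",
]


def build_context(project_dir: Path) -> str:
    """Concatenate the setup files into one block, each under its file name."""
    sections = []
    for name in CONTEXT_FILES:
        path = project_dir / name
        if not path.exists():
            # Fail loudly rather than run with a layer of context missing.
            raise FileNotFoundError(f"Missing setup file: {name}")
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {name}\n\n{body}")
    return "\n\n".join(sections)
```

Calling `build_context(Path("clients/example-client"))` (a made-up path) would return one Markdown string to place ahead of the prompt. Stopping on a missing file is a deliberate choice: a draft written without the voice rules or claim library is the generic output you’re trying to avoid.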

Then the prompt for each page becomes one sentence.

“Draft the mechanism-of-action page for [product], following the brand voice and template. Use only the claim library for efficacy statements.”

The output lands close enough that a medical writer’s pass takes 25 to 30 minutes per page, not two hours. And — far more importantly — you get the work out the door in half the time, because the model isn’t inventing evidence or drifting outside the indication.

Here’s where the time goes:
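As a rough illustration, here is the arithmetic for the eight-page hub, counting only setup time and the medical writer’s pass. The page count, the two hours per page and the 25 to 30 minutes per page come from the example above. The four hours of setup is my assumption for “a few hours”, so change it to match your own experience.

```python
PAGES = 8                          # pages in the brief
DEFAULT_HOURS_PER_PAGE = 2.0       # medical writer rework, the default way
SETUP_HOURS = 4.0                  # ASSUMPTION: "a few hours" taken as four
EDIT_MINUTES_PER_PAGE = (25, 30)   # medical writer's pass once setup exists

# The default way: no setup, heavy rework on every page.
default_total = PAGES * DEFAULT_HOURS_PER_PAGE

# The setup way: one-off setup plus a short pass per page (low and high ends).
setup_totals = tuple(SETUP_HOURS + PAGES * m / 60 for m in EDIT_MINUTES_PER_PAGE)

print(f"Default way: {default_total:.1f} hours")
print(f"Setup way: {setup_totals[0]:.1f} to {setup_totals[1]:.1f} hours")
# Default way: 16.0 hours
# Setup way: 7.3 to 8.0 hours
```

That leaves out the pages rejected on first pass in the default way, so the real gap is likely wider. On the next project for the same client, the setup hours mostly drop out of the sum.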

Now look across the next twelve months. The same client commissions more work: a new patient-type page, congress materials, an HCP webinar deck. The four files don’t need rebuilding. Every new piece of work feeds back into the claim library and the reference pages. The brand voice gets more consistent. QC gets easier (and quicker). And the team’s first experience of AI is one where it works inside the rules, not against them.

The 10% Prompt + 10% QC and refine

Remember.

The prompt. One paragraph. The task, the audience, the constraint, the format. That’s it.

The review. One revision round. For regulated clients, this is also where quality control and compliance get checked against the rules baked into setup.

If setup did its job, that check is verification, not rework. If you’re going past one round consistently, look upstream. The project setup is where the time should go.

Real projects have human factors: a brief turns out to be wrong, people change their minds on the direction, and the same happens on the client side. So a single revision round might sound slightly idealistic, but you get my point.

When This Doesn’t Pay Off

The 80/20 rule has limits. Some projects don’t earn the upfront investment back.

One-off tasks. A single landing page for a one-off client may not justify the setup.

Highly creative conceptual work. AI is a finisher there, not a starter — constraints kill the spark.

Clients you’ll never work with again. The setup doesn’t amortise across one engagement.

The rule of thumb: if you’ll write more than five pieces of copy for your client, build the context. Otherwise, prompt and edit.

Move The Time To The Front

Most copy teams adopting AI right now are doing it badly. They’re working with a model that doesn’t have the right context. The output’s generic. Confidence drops. The team goes back to grinding hours.

That doesn’t have to be your experience.

You’re the one who decides how AI lands. Move the time to the front. Build the project, not the prompt. Your team gets AI that actually assists from day one. Your clients get copy that’s on brand, in half the time. And you come away feeling like you’ve just transformed the process like it’s 2035.

You don’t have to be ahead of every AI development. You just have to lead this one well.

And if this was useful, send it to a writer in your business who could benefit from learning more AI skills.

Speak soon, Tim